What's wrong with FON - a hypothesis?

As we know, Find Out Now (FON) relies on a fundamentally different recruitment base from traditional pollsters like YouGov. Their respondents are drawn from daily users of Pick My Postcode, a free online lottery site. Users register, return every day for prize draws, and are offered an optional quick survey question before seeing the winner. This creates a highly self-selecting pool of roughly 100,000 daily participants from a couple of million registered users.

Now, obviously FON applies demographic quotas and weighting to match census figures (age, gender, region, social grade ABC1/C2DE, and past vote). However, I would argue that the initial sample is 'infected' with a behavioural bias that weighting cannot correct.

The core issue is selection on psychological and behavioural traits. People who habitually check a free lottery site and voluntarily answer polls tend to be more impulsive, optimistic, and less risk-averse than the general population.

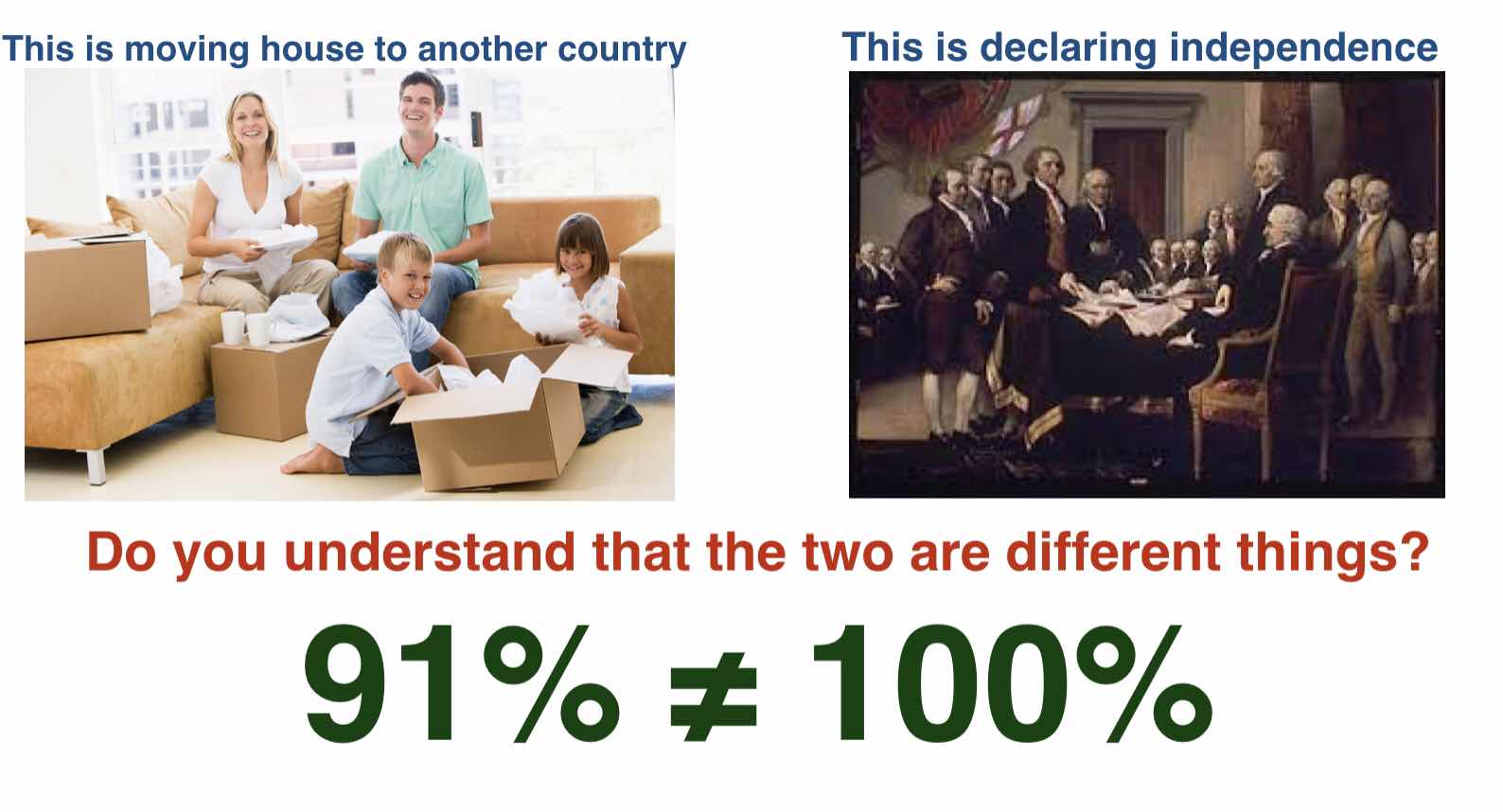

Research on lottery participants consistently shows higher impulsivity, extraversion, and a willingness to act on low-stakes opportunities. So when it comes to consequence-free hypothetical questions (e.g. “how would you vote in an independence referendum?”), these people are more likely to give a quick “Yes” without deeply weighing risks and trade-offs. The voluntary, fun, low-effort nature of the Pick My Postcode survey, combined with an entirely hypothetical question lacking detail, amplifies this.

YouGov, by contrast, maintains a large proprietary online panel built through advertising and partnerships. While still opt-in, it is far more controlled: they actively invite matched panelists for each survey rather than accepting whoever clicks a button on a lottery page. YouGov’s pool attracts people willing to complete longer, more regular surveys, often with a stronger civic or political interest. This produces a different kind of enthusiasm bias (politically engaged people), but one that is generally more stable and less skewed toward impulsivity.

The practical consequences are visible in the data. On Scottish independence, FON regularly shows Yes support 7–10 points higher than YouGov (e.g. ~50% Yes vs low-40s).

Interestingly, though, on bread-and-butter Westminster or Holyrood voting intention the two pollsters converge much more closely (although FON still has a smaller pro-Green bias). This may be a herding effect, but it may equally be that the bias is particularly potent on low-importance, almost whimsical questions without consequence, where snap emotional responses matter more.

Demographic weighting helps FON on observables (age, class, region), but it cannot adjust for unmeasured differences in personality, time-use, or decision-making style. Someone too busy, risk-averse, or uninterested in lotteries never enters the pool at all. This is analogous to polling only people who answer landline calls, where enthusiasm bias may have an impact: no amount of post-stratification fully removes the skew.
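To make the weighting point concrete, here is a toy simulation. It is a minimal sketch, not a model of FON's actual panel or weighting scheme. Every number in it is invented for illustration: the age shares, the baseline Yes rates, the share of "impulsive" people, and the joining probabilities. The only thing it shows is the mechanism: if an unmeasured trait makes people both more likely to join the panel and more likely to say Yes, weighting the panel back to the correct age profile removes the age skew but leaves the trait skew in place.

```python
import random


def simulate(n_population=200_000, seed=0):
    """Toy model: selection on an unobserved trait survives age weighting.

    All parameters below are hypothetical and chosen only for illustration.
    Returns (true_yes, raw_panel_yes, age_weighted_panel_yes).
    """
    rng = random.Random(seed)
    age_groups = ["18-34", "35-54", "55+"]
    age_shares = [0.30, 0.35, 0.35]                            # assumed population mix
    base_yes = {"18-34": 0.55, "35-54": 0.45, "55+": 0.35}     # assumed Yes rates

    population = []
    for _ in range(n_population):
        age = rng.choices(age_groups, weights=age_shares)[0]
        impulsive = rng.random() < 0.30        # unobserved trait (assumed 30%)
        p_yes = base_yes[age] + (0.15 if impulsive else 0.0)
        says_yes = rng.random() < p_yes
        # Assumed selection: impulsive people are 4x as likely to join the
        # panel, and the oldest group is half as likely to join.
        p_join = 0.02 * (4.0 if impulsive else 1.0) * (0.5 if age == "55+" else 1.0)
        in_panel = rng.random() < p_join
        population.append((age, says_yes, in_panel))

    true_yes = sum(yes for _, yes, _ in population) / n_population

    panel = [(age, yes) for age, yes, joined in population if joined]
    raw_yes = sum(yes for _, yes in panel) / len(panel)

    # Post-stratify on age only: reweight each age cell to its population share.
    weighted_yes = 0.0
    for group in age_groups:
        pop_share = sum(1 for age, _, _ in population if age == group) / n_population
        cell = [yes for age, yes in panel if age == group]
        weighted_yes += pop_share * sum(cell) / len(cell)

    return true_yes, raw_yes, weighted_yes


if __name__ == "__main__":
    true_yes, raw_yes, weighted_yes = simulate()
    print(f"true Yes share:          {true_yes:.3f}")
    print(f"raw panel Yes share:     {raw_yes:.3f}")
    print(f"age-weighted panel Yes:  {weighted_yes:.3f}")
```

In this made-up setup the age weighting pulls the raw panel figure part of the way back, but the weighted estimate still sits several points above the true value, because the impulsive are over-represented within every age cell. Whether anything like this happens in the real FON pool is exactly the hypothesis, not something the sketch can prove.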

FON’s method certainly delivers cheap, fast, high-response-rate polling with transparent quotas, but it sacrifices depth of representativeness.

YouGov’s panel, while imperfect and still self-selecting at the recruitment stage, starts from a broader and more deliberate base.

The result is that FON appears systematically “hotter” on certain high-salience, low-consequence issues such as a fictional indyref question. For anyone analysing independence polling (or any other highly hypothetical issue-based question), this methodological difference is a major reason to treat FON numbers with considerable caution. This is a hypothesis, but it fits the facts:

- The source of the FON respondents

- The nature of people who like to gamble

- The vague and hypothetical nature of a consequence-free question, which gets more and more that way as time removes us from 2014

- The differences between FON and YouGov when it comes to the indyref question

- Their herding when it comes to actual verifiable voting
